Creating a VI (Virtual Infrastructure) Cluster in VCF 4.0.1.1

I had long wanted to learn more about VMware Cloud Foundation but never had the time. Recently (ahem, COVID) I found extra time to try new things and learn in my home lab. For the setup, I used the VCF Lab Constructor (downloaded here) to create VCF. After...


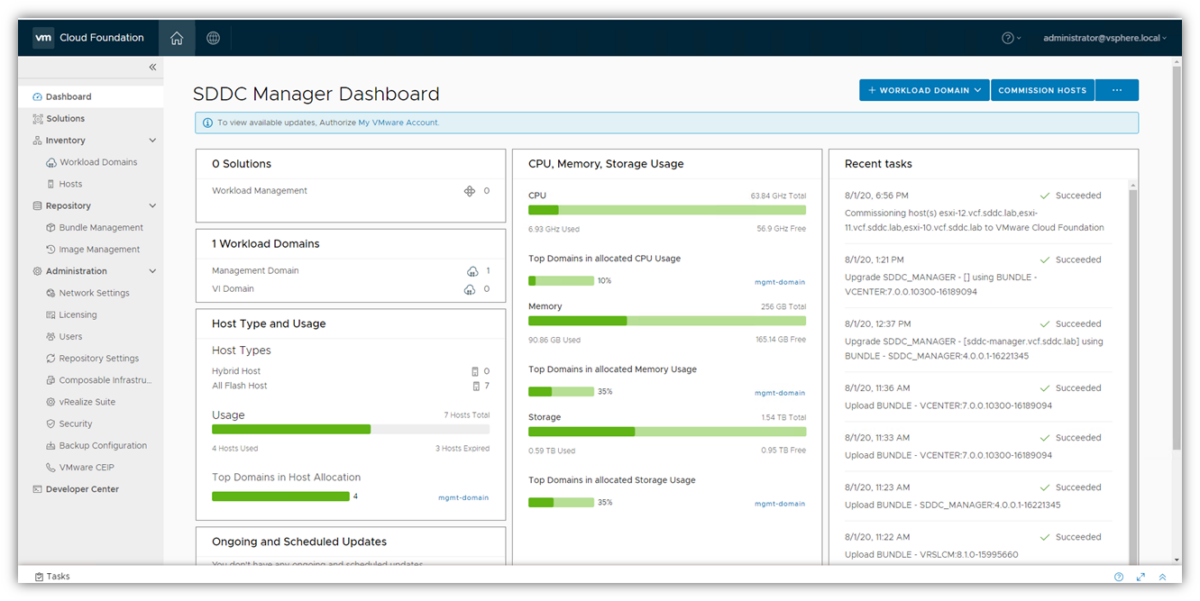

VMware Cloud Foundation 4.0.1: Problems with SDDC Manager refreshing

I've been studying VMware Cloud Foundation 4.0.1 and have it running in my lab. I've noticed it can be a bit finicky at times. One of the issues I've run into so far: when I added 3 more hosts, everything seemed to be fine. I then wanted to add a...
